Hello, I'm

Garvish Bhutani

Low Power AI Architecture Intern @ Qualcomm

About Me

I'm a 3rd-year Engineering Science student at the University of Toronto, majoring in Robotics with a Minor in Machine Intelligence. I build systems that sit at the intersection of hardware and software — from autonomous navigation stacks for robots to AI hardware architecture on Snapdragon chips.

Currently interning at Qualcomm, I work on low-power AI hardware integration, validation flows, and performance profiling for next-generation Snapdragon platforms. Outside of work, I lead drone and rover projects with the Robotics for Space Exploration (RSX) design team.

I'm passionate about robotics, embedded AI, and building things that move and think in the real world.

Education

University of Toronto

Engineering Science — Robotics Major, Machine Intelligence Minor

CGPA 3.61 · Dean's Honour List · Sep 2022 – May 2027


Experience

Low Power AI Hardware Architecture Intern

Qualcomm · Toronto, ON

- ▸Performed system integration of audio, sensor, and AI hardware on Snapdragon chips across mobile, laptop, wearable, and automotive platforms.

- ▸Developed validation and graphing flows to detect defects in the Network on Chip (NoC) bus interface prior to silicon design and implementation.

- ▸Gained experience with bandwidth and latency testing and with NoC design for bus protocols, including AMBA AXI.

- ▸Streamlined performance analysis flows for DSP and NPU using Transaction Level Modeling.

- ▸Tested and profiled hardware prototypes to prove feasibility and collect performance data for cutting-edge features.

Drone Lead — Heavy Duty Drone Survey Mission

Robotics for Space Exploration (RSX), UofT · Toronto, ON

- ▸Developed a 17-inch quadcopter capable of carrying a 1.1 kg payload with up to 20 minutes of flight time in windy conditions.

- ▸Tuned PID controllers and validated ArduPilot-based position and altitude hold for stable, reliable flight performance.

- ▸Led the design and implementation of a gripper system capable of picking up objects weighing up to 1 kg.

- ▸Managed full-system integration, assembly, and iterative design improvements to enhance reliability and maintainability.

Robotics Researcher — Sidewalk Navigation

Robot Vision and Learning (RVL) Lab, UofT · Toronto, ON

- ▸Integrated a Model Predictive Control local planner (SICNav) with Google Cartographer for localization, an A* global planner, and a pedestrian detection (PiFeNet, using pillar-aware attention) and tracking system on a Clearpath Jackal robot equipped with an Ouster LiDAR, creating a fully autonomous navigation stack that safely interacts with pedestrians on sidewalks.

- ▸Developed a novel implementation of Cartographer in ROS (Python and C++) combining a 3D mapping model with a 2D motion model, reducing localization error to 14 cm and enabling safe maneuvering and obstacle avoidance on narrow sidewalks.

- ▸Iterated on successive implementations through rigorous indoor and outdoor testing to integrate every part of the navigation stack and enable the robot to navigate despite noisy localization and perception.

Software and Autonomy Lead — Mars Rover Navigation

Robotics for Space Exploration (RSX), UofT · Toronto, ON

- ▸Developed an autonomous navigation system that placed in the top 5 of 35 contestants in a University Rover Challenge (URC) mission to reach waypoints on featureless, Mars-like outdoor terrain, built in ROS with Python and C++ using in-house obstacle avoidance algorithms and off-the-shelf ROS packages.

- ▸Coded the rover's manual controls in C++, making them more intuitive while giving the driver greater flexibility.

- ▸Developed and led workshops teaching more than 100 students Git concepts, dual-booting Linux alongside Windows, and basic robotics concepts, giving them hands-on experience with ROS, sensors, and navigation stacks.

- ▸Implemented Easy Drive on the rover, enabling teleoperation with a Bluetooth PS4 controller, using system service calls and Bash scripting for automatic setup.

- ▸Set up the rover's Wi-Fi communications system using Ubiquiti equipment, enabling rover control at ranges greater than 1 km.

Projects

A selection of research, engineering, and software projects spanning robotics, AI/ML, and hardware design.

Autonomous Sidewalk Navigation

Integrated a model predictive control local planner (SICNav) with Google Cartographer, an A* global planner, and PiFeNet pedestrian detection on a Clearpath Jackal robot with Ouster LiDAR. Achieved 14 cm localization error from ground truth. Presented at IEEE ICRA 2025 Workshop on Field Robotics.

- ▸14 cm localization error from ground truth

- ▸Presented at IEEE ICRA 2025

- ▸Novel 3D mapping + 2D motion model for Cartographer

Mars Rover Autonomous Navigation

Built autonomous waypoint navigation for the URC using ROS, Python, and C++ with a Velodyne LiDAR, ZED/RealSense cameras, an IMU, and GPS. Implemented obstacle avoidance, communications at ranges over 1 km, a Gazebo simulation, and light beacon detection with OpenCV.

- ▸1+ km communication range

- ▸Full Gazebo simulation environment

- ▸IR beacon detection with OpenCV

Heavy Duty Survey Drone

Designed, built, and tuned a heavy-lift quadcopter for survey missions. Implemented ArduPilot-based position and altitude hold with tuned PID controllers. Designed a gripper system capable of picking up 1 kg objects. Led full-system integration and iterative hardware improvements.

- ▸1.1 kg payload capacity

- ▸20-minute flight time in wind

- ▸1 kg gripper system

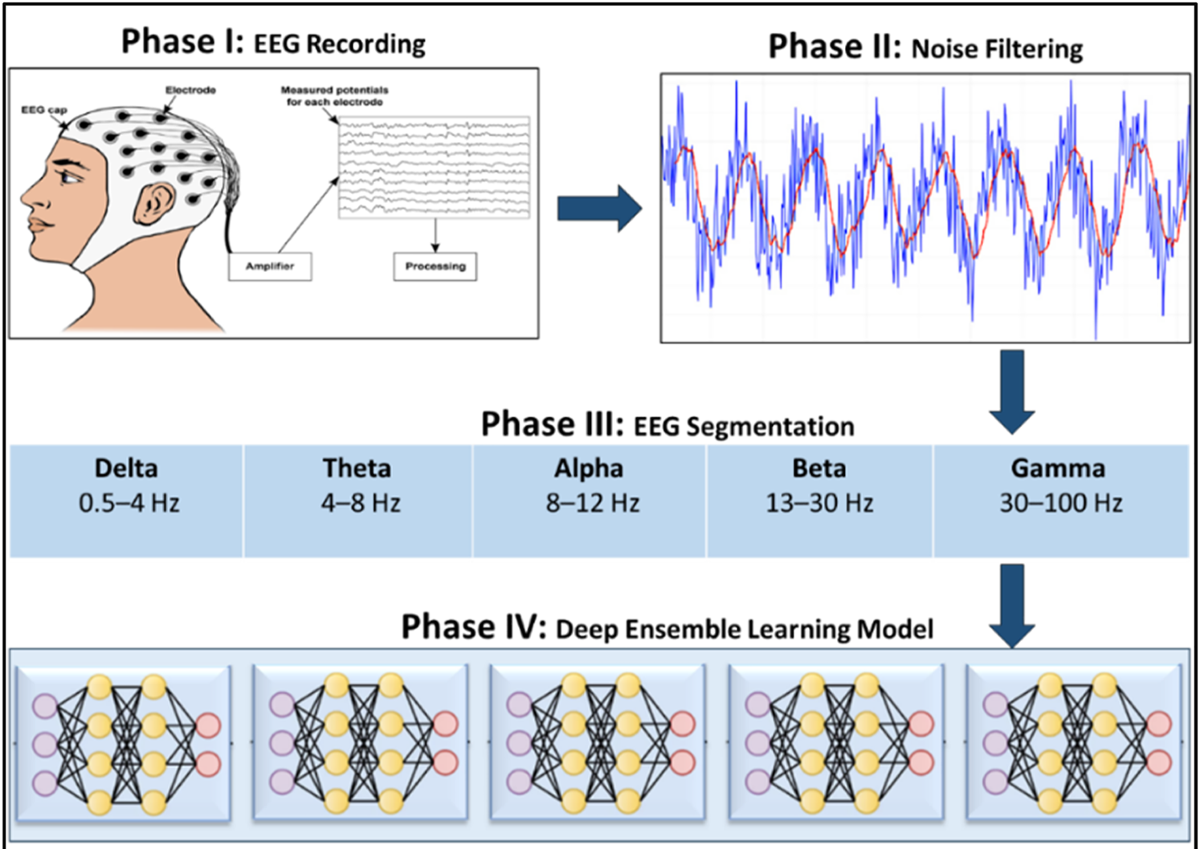

Alzheimer's Detection via EEG

Trained a convolutional neural network in PyTorch on electroencephalogram (EEG) data to classify Alzheimer's patients. Evaluated over 50 model architectures to select the final model. Achieved 100% recall, 77% precision, and 87% F1 score on unseen test data.

- ▸100% recall on test data

- ▸87% F1 score

- ▸50+ model architectures evaluated

MediLens: Biometric AR Goggles

Built AR goggles from a pair of sunglasses, combined with noise-cancelling earbuds and a heart-rate sensor. Uses AssemblyAI for real-time transcription and translation into on-screen captions. Designed for individuals with autism spectrum disorder (ASD) or deafness to reduce sensory overload while maintaining communication. Integrates Google Cloud, the OpenAI API, and Meta AI.

- ▸Real-time speech-to-caption

- ▸Heart rate biometric integration

- ▸Designed for accessibility

Food2Fuel: Campus Organic Waste System

Top 10 finish among 42 teams. Proposed on-site anaerobic digesters to convert campus organic waste into biogas and electricity. Over its lifetime, the solution would divert 89,400 tonnes of organic matter from landfills and reduce CO₂ emissions by 35,800 tonnes at $100/tonne by 2050.

- ▸Top 10 of 42 teams

- ▸89,400 tonnes of organic waste diverted

- ▸35,800 tonnes CO₂ reduction

Resume

A snapshot of my education, experience, and skills.

- ▸3.61 CGPA

- ▸16 months of internship experience

- ▸2+ years of leadership

- ▸1 IEEE publication

- ▸$12K+ in awards won

Get in Touch

Open to internships, research collaborations, and full-time opportunities in robotics, AI hardware, and autonomy. Let's connect.


Location

Toronto, ON

Response Time

I typically respond within 24–48 hours. For urgent matters, email directly.